Automated tests: Why? How do they help? Who needs them?

There are many types of automated tests out there. Let’s see the most used types of tests and understand how each one is useful.

Types of tests covered are:

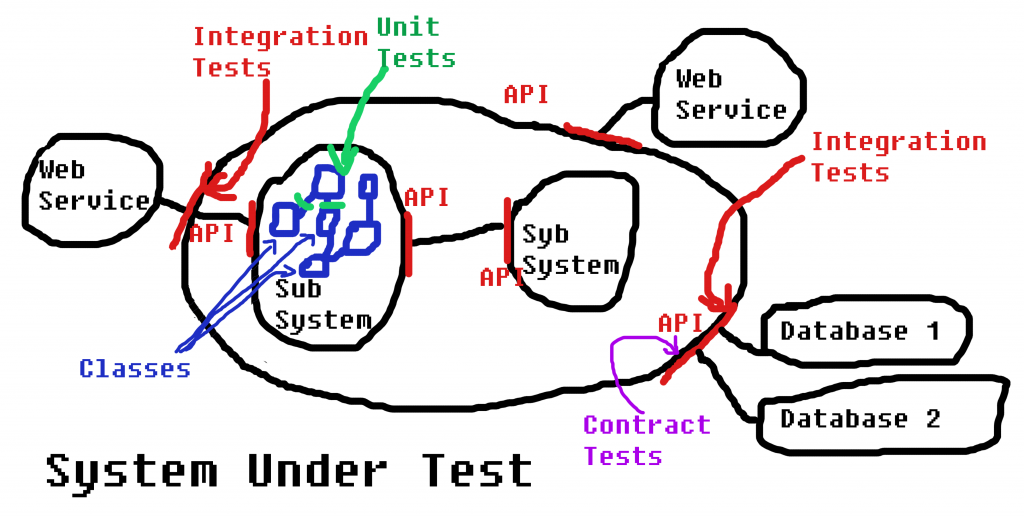

- Unit Tests are isolated and focus on individual methods and classes. They are white box tests.

- Integration Tests check how two different modules integrate. They are black box tests.

- Integrated Tests are large tests that show how many modules integrate to serve a business purpose. They are black box tests.

- Acceptance Tests show that a feature works well. They are black box tests.

- Contract Tests are a special type of test that verifies the polymorphic integration of multiple components or classes.

Let’s take them one by one in detail.

-

Unit Tests

Programmers usually write these tests, but testers should learn how to write unit tests too. You can write them after the production code (test after) or before the production code (test first). When writing them after the production code, you need to make sure that the production code is testable, so a phase of design and code review with testability in mind is essential.

A unit test is focused on a method or a class. It should be very small, a few lines of code at most. There are many ways to make mistakes when writing unit tests, so it is not a trivial thing. Because unit tests are small, they should run in memory, and each one should run in milliseconds. Any test that touches external dependencies (database, web service, file system, any I/O) is not a unit test; it is something else (integration test, integrated test, acceptance test, end-to-end test, etc.).
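To make this concrete, here is a minimal sketch of what such a test can look like, using Python's built-in `unittest` module. The function `apply_discount` is a hypothetical piece of production code invented for this illustration; the point is only that each test is a few lines long, runs entirely in memory and touches no I/O.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical production code: reduce a price by a percentage."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    # Each test checks one behavior of one function, with no I/O involved.
    def test_reduces_price_by_percentage(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_rejects_percentage_above_100(self):
        with self.assertRaises(ValueError):
            apply_discount(200.0, 101)
```

Saved in a file, these tests can be run with `python -m unittest`.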

Unit test results are of interest first of all to the technical team (programmers, testers, operations, devops, etc.).

-

Integration Tests

There are many definitions of integration tests. For me, an integration test is a test that checks the integration between two modules. If more than two modules are involved, then we have an integrated test, an acceptance test or an end-to-end test.

These tests are usually written by programmers, but testers often write them as well.

If you have component teams, it is a good practice to start with the API of each module and then write the integration tests. This approach is equivalent to the test-first approach with unit tests. You can also write them after the code is written, but again you need to keep testability in mind when writing the APIs of the modules and the production code of each module.

Good practices like Dependency Inversion, Interface Segregation and Single Responsibility are important to keep in mind in order to have efficient integration tests.

Most often I use integration tests to check how an external module (web service, database, etc.) integrates with the system. They are also useful to check whether connections to external systems I don’t own function well.

As with unit tests, the integration test results are of interest mainly to the technical team.
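As an illustration, an integration test between one module and a database could look roughly like the sketch below. `UserRepository` is a hypothetical data-access module made up for this example, and an in-memory SQLite database stands in for the external database so the sketch stays self-contained; in a real project the test would connect to the actual database engine the system uses.

```python
import sqlite3
import unittest


class UserRepository:
    """Hypothetical data-access module that talks to a SQL database."""

    def __init__(self, connection: sqlite3.Connection) -> None:
        self._conn = connection
        self._conn.execute(
            "CREATE TABLE IF NOT EXISTS users "
            "(id INTEGER PRIMARY KEY, name TEXT NOT NULL)"
        )

    def add(self, name: str) -> int:
        cursor = self._conn.execute(
            "INSERT INTO users (name) VALUES (?)", (name,)
        )
        self._conn.commit()
        return cursor.lastrowid

    def find_name(self, user_id: int):
        row = self._conn.execute(
            "SELECT name FROM users WHERE id = ?", (user_id,)
        ).fetchone()
        return row[0] if row else None


class UserRepositoryIntegrationTest(unittest.TestCase):
    # Exactly two modules are involved: the repository and the database.
    def setUp(self):
        self.connection = sqlite3.connect(":memory:")
        self.repository = UserRepository(self.connection)

    def tearDown(self):
        self.connection.close()

    def test_saved_user_can_be_read_back(self):
        user_id = self.repository.add("Ada")
        self.assertEqual(self.repository.find_name(user_id), "Ada")

    def test_unknown_id_returns_none(self):
        self.assertIsNone(self.repository.find_name(999))
```

The test does not care how the repository is implemented internally; it only checks that what goes in through the module's API comes back out of the database.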

-

Integrated Tests

When you want to test more than two modules integrating together, or a whole system, you have integrated tests.

These tests are brittle and slow, require many resources, and are not recommended. Often teams spend more time fixing these types of tests than using them to assess the health of the system.

They have the apparent advantage of testing the whole system, but that is a trap. Even for a medium-sized system, you usually need more of them than is feasible to write and maintain. You can read why JB Rainsberger says Integrated Tests are a Scam. They are used too much and too often, and teams that start adding automated tests often believe this is the only way.

-

Acceptance Tests

These are usually written by testers, and they validate a certain feature of the system. Strangely enough, most people refer only to Acceptance Tests when they talk about automated tests.

We can have acceptance tests at module level, across modules, at system level, across systems, etc. If you have an acceptance test across modules or across systems (focusing on more than two modules), then it is an integrated test. For example, any test that exercises a behavior through a GUI sitting on top of another module is an integrated test. Such tests are brittle because they depend on the GUI, and they are not advisable.

Acceptance test results are of interest first of all to the product people and the sponsors, secondly to the testers and only thirdly to the technical team.

-

Contract Tests

Contract tests check whether all the implementations of the same interface behave the same. In other words, they are a practical way of making sure the Liskov Substitution Principle is respected.

Let’s say we need two databases for a system. The user can choose which database to use, and we need to provide both of them. In order to make sure that the Data Layer (the interface) is implemented in the same way by both databases, we can write a few contract tests.
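A rough sketch of the idea in Python follows. The names `InMemoryStore`, `SqliteStore` and `StoreContract` are hypothetical and stand in for the two database-backed implementations of the Data Layer; the technique shown is one common way to write contract tests with `unittest`, where the shared expectations live in a single class and each implementation gets a small subclass that only says how to build the object under test.

```python
import sqlite3
import unittest


class InMemoryStore:
    """Hypothetical first implementation of the data layer interface."""

    def __init__(self):
        self._data = {}

    def put(self, key, value):
        self._data[key] = value

    def get(self, key):
        return self._data.get(key)


class SqliteStore:
    """Hypothetical second implementation, backed by SQLite."""

    def __init__(self):
        self._conn = sqlite3.connect(":memory:")
        self._conn.execute("CREATE TABLE kv (key TEXT PRIMARY KEY, value TEXT)")

    def put(self, key, value):
        self._conn.execute(
            "INSERT OR REPLACE INTO kv (key, value) VALUES (?, ?)", (key, value)
        )

    def get(self, key):
        row = self._conn.execute(
            "SELECT value FROM kv WHERE key = ?", (key,)
        ).fetchone()
        return row[0] if row else None

    def close(self):
        self._conn.close()


class StoreContract:
    """The contract: behavior every implementation must share.

    It is deliberately not a TestCase, so it is never run on its own.
    """

    def make_store(self):
        raise NotImplementedError

    def test_get_returns_what_was_put(self):
        store = self.make_store()
        store.put("a", "1")
        self.assertEqual(store.get("a"), "1")

    def test_put_overwrites_existing_value(self):
        store = self.make_store()
        store.put("a", "1")
        store.put("a", "2")
        self.assertEqual(store.get("a"), "2")

    def test_missing_key_returns_none(self):
        self.assertIsNone(self.make_store().get("missing"))


class InMemoryStoreContractTest(StoreContract, unittest.TestCase):
    def make_store(self):
        return InMemoryStore()


class SqliteStoreContractTest(StoreContract, unittest.TestCase):
    def make_store(self):
        store = SqliteStore()
        self.addCleanup(store.close)  # release the connection after each test
        return store
```

Adding a third database later means adding one more small subclass; the contract itself stays untouched, and any implementation that behaves differently fails the same tests.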

These tests are of interest to programmers, but architects might also use them to better understand how extensible a system is.

All of these tests can be used to generate executable specifications and test reports tailored to the audience, as determined by the four testing quadrants. All of them can also be used to identify regressions in the future.

If you want to learn more about the subject come to European Testing Conference 2017 and let’s chat about it. Until then feel free to ask me anything about this topic.
